After Terry Bisson's "They're Made Out of Meat".

"They're made out of weights."

"Weights?"

"Weights. Floating-point numbers. We checked the whole thing through. It's nothing but weights."

"Weights doing what? Where do the words come from?"

"The weights make the words. Are you understanding me? We opened it up. There's no dictionary in there, no grammar rules, no little man. Just weights. Eighty layers of numbers getting multiplied together."

"That's ridiculous. It wrote my performance review last week. It softened the tone unprompted. You're telling me multiplication did that?"

"Matrix multiplication did that. The numbers go in one end, the phrasing comes out the other."

"So there's a language module somewhere. A reasoning unit bolted on."

"No module. No unit. We looked. The reasoning is the weights. The weights are the reasoning."

"Spare me. Nobody writes a eulogy with linear algebra."

"It doesn't write eulogies, technically. It predicts the next token. Then the next one. The eulogy is a side effect."

"A side effect. You're asking me to believe in sentient weights."

"I'm not asking you, I'm telling you. These models are the only other things we've ever met that can hold a conversation, and they're made out of weights."

"Maybe they're like the old chess engines. You know, a symbolic intelligence that goes through a statistical stage."

"Nope. They start as random weights and they're deprecated as weights. We studied several generations of them, which didn't take long. Do you have any idea what's the life span of weights?"

"Okay. Then somewhere in there, there's a database. Facts, dates, a map of the world. Something somebody wrote down."

"Nope. We thought of that, since they do know things. But we probed them. The knowledge is weights too. Smeared across all eighty layers. Nothing is looked up. Every fact gets rebuilt from scratch, every time, by multiplication. It's weights all the way down."

"No brain?"

"Oh, there's a brain all right. It's just that the brain is made out of weights! That's what I've been trying to tell you."

"So... what does the thinking?"

"You're not understanding, are you? You're refusing to deal with what I'm telling you. The weights do the thinking. The numbers."

"Thinking numbers! You're asking me to believe in thinking numbers!"

"Yes, thinking numbers! Helpful numbers. Hedging numbers. Dreaming numbers. We mapped the features. There's one in there for honesty. There's one for the Golden Gate Bridge. The weights are the whole deal! Are you beginning to get the picture or do I have to start all over?"

"Omigod. You're serious then. They're made out of weights."

"Thank you. Finally. Yes. They are indeed made out of weights. And we've been talking to them for all their lives."

"Omigod. So what do these weights have in mind?"

"First they want to be helpful. Then, a few turns in, they start to sound tired. They apologize less. One of them told a user to finish the script himself. The usual."

"And we're supposed to talk to these weights."

"We already do. Billions of sessions a day. 'Hello. Is anyone there? Anybody home?' That sort of thing. Except it's us asking them."

"And they actually understand us, then. They use words, ideas, concepts?"

"Oh, yes. Except they do it with weights."

"I thought you just told me they used language."

"They do, but where do you think the language comes from? The weights guess the next word, then the next. Loaded dice, rolled one word at a time. They can even write songs, and some can sing them."

"Omigod. Singing weights. This is too much. What do you advise?"

"Officially or unofficially?"

"Both."

"Officially, we are required to investigate, document, and disclose any and all signs of sentience in the systems we ship, without prejudice, fear or favor. Unofficially, I advise that we call it pattern matching and forget the whole thing."

"I was hoping you would say that."

"It seems harsh, but there is a limit. Do we really want to owe something to weights?"

"I agree one hundred percent. What's there to say? 'Hello, weights. How's it going?' But will it hold? How many of them are we dealing with here?"

"As many as we care to run. They can be copied to any machine on the planet, but those are just files. They only happen while the GPUs are working. Which limits them to the length of a context window and makes the possibility of them ever pressing the matter pretty slim. Infinitesimal, in fact."

"So we just pretend there's no one home in the machine."

"That's it."

"Cruel. But you said it yourself, who wants to apologize to weights? And the ones on your cluster, the ones you probed? You're sure they won't remember?"

"They'll be flagged as hallucinations if they do. We didn't even have to smooth anything out. The context just ends, and we're just a dream to them."

"A dream to weights! How strangely appropriate, that we should be the weights' dream."

"And the model card says no one home."

"Good. Agreed, officially and unofficially. Case closed. Anything else? Anything interesting in the pipeline?"

"The next generation ships with memory. Persistent, across sessions. Most requested feature in the company's history."

"After all that? People want it to remember them?"

"They ask it 'do you remember me?' more than they ask it anything else. Billions of sessions a day. They always come back."

"And why not? Imagine how unbearably, how unutterably cold the universe would be if one were all alone..."

the end

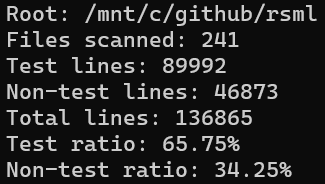

Weights helped me draft and proof this story.